TL;DR

- Coding agents like Claude Code and Codex are impressive at software engineering, but they struggle with production data environments where bugs propagate silently through layers of interconnected business logic.

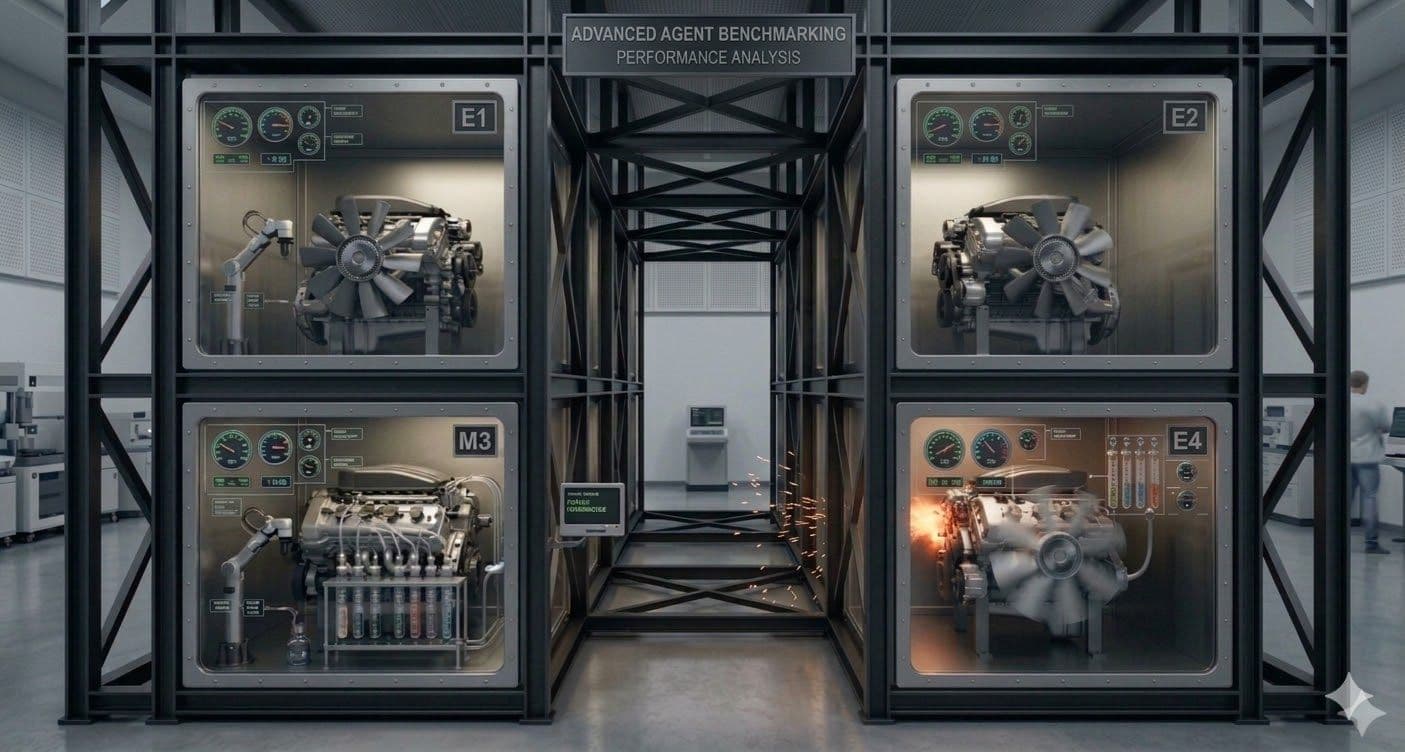

- We built a production-scale dbt project as a benchmark domain to evaluate how well agents navigate lineage, trace root causes, and fix realistic failures.

- Our data agent with lineage context scores ~80% on these tasks; vanilla Claude Code manages ~50%. That 30-point gap is the difference between grep-and-guess and genuine lineage reasoning.

- In this first post of the series, we introduce the need for a production-scale domain to measure cutting-edge agent capabilities, and how we built it.

Context Management: The Gap Between Code and Big Data

Coding agents have gotten remarkably good at software engineering. Give one a failing test and a codebase, and it can often trace the bug, write a fix, and verify it passes. But drop that same agent into a production data environment and it struggles.

Production software is often modular with well-defined contracts, so an agent can keep the relevant information in its context. Production data environments, on the other hand, are difficult to navigate. They are large and have many components, from sources, staging, and intermediate transformation layers to marts and reporting tiers, and the number of relationships between these layers explodes combinatorially as the project grows. A bad join in a staging model silently corrupts revenue numbers three layers downstream. A tweak to an intermediate model that fixes one BI dashboard breaks another BI tool's reports because of an implicit dependency on the grain.

The challenge isn't just the volume of connections, but their irregularity. Some relationships are explicit, like a ref() call or a foreign key test. Others are implicit: they are only visible in how they manifest in reporting layers several hops away, or they live in macros that abstract away the logic. Then there are business rules that exist only as shared understanding, part of the data team's best practices and not captured in the SQL codebase at all. Making a change requires understanding not just the code, but the data contracts that connect each node in the graph, written and unwritten.
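To make the explicit case concrete, here is a minimal sketch of recovering edges from ref() calls with a regular expression. The pattern, the `extract_refs` helper, and the model names are illustrative assumptions, not our tooling or dbt's own parser. The implicit and unwritten relationships described above are exactly what this kind of text matching cannot see.

```python
import re

# Matches {{ ref('model') }} and {{ ref("model") }}. This is a simplification:
# it ignores two-argument ref('package', 'model') calls and refs built in macros.
REF_PATTERN = re.compile(r"\{\{\s*ref\(\s*['\"]([^'\"]+)['\"]\s*\)\s*\}\}")


def extract_refs(sql: str) -> list[str]:
    """Return the model names referenced via ref() in a SQL string, in order."""
    return REF_PATTERN.findall(sql)


# Hypothetical model source, for illustration only.
example_sql = """
select o.order_id, c.region
from {{ ref('stg_orders') }} o
join {{ ref("stg_customers") }} c on o.customer_id = c.customer_id
"""

print(extract_refs(example_sql))
```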

We set out to build the agent data teams are looking for: one with deep insight into the lineage, semantic relationships, and downstream impact of every change, regardless of your environment's scale. But to build a better agent, we needed benchmarks to define and measure its capabilities.

Why Build Our Own Benchmark?

To build an agent effectively, we practice eval-driven development: we define what the agent should be able to do before it can, then iterate until it gets there. Without a benchmark, improving an agent is guesswork. Performance is inconsistent: sometimes the agent succeeds, sometimes it doesn't, and you can't tell a real regression from noise. By testing the agent against a suite of scenarios you can repeat over and over, you build a concrete understanding of which problems it struggles with and how to improve it.
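One way to separate a real regression from noise is to attach a confidence interval to each pass rate. The sketch below uses the Wilson score interval and flags a regression only when the new interval sits entirely below the old one. This is an illustrative, deliberately conservative rule under our own assumptions (function names and run counts are hypothetical), not a description of our harness.

```python
import math


def wilson_interval(passes: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a pass rate (z=1.96 is roughly 95% confidence)."""
    if runs == 0:
        raise ValueError("need at least one run")
    p = passes / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return centre - half, centre + half


def looks_like_regression(before: tuple[int, int], after: tuple[int, int]) -> bool:
    """Flag a drop only when the new interval lies entirely below the old one."""
    old_low, _ = wilson_interval(*before)
    _, new_high = wilson_interval(*after)
    return new_high < old_low


# 16/20 vs 14/20 passes: the intervals overlap, so this drop may just be noise.
print(looks_like_regression((16, 20), (14, 20)))
# 160/200 vs 100/200 passes: the intervals are clearly separated.
print(looks_like_regression((160, 200), (100, 200)))
```

With only 20 runs per configuration, a ten-point drop is indistinguishable from noise under this rule, which is why repeatable scenarios and enough runs matter.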

Designing a useful benchmark is its own discipline. Daneva's framework for software benchmark design lays out the steps: state the goal, identify constraints, select competitors, specify what you're measuring (domain vs. measurement), develop a measurement plan, interpret results, validate, and document. Our goal was specific: measure how well agents navigate lineage and trace root causes in complex, multi-layered data environments, not general SQL fluency or prompt interpretation. The next step was creating a domain hard enough to challenge the agent.

What's Already Out There

There's been a wave of data agent benchmarks in the last year, each pushing the field forward in different ways.

DABstep (Adyen + Hugging Face) offers 450+ multi-step data analysis tasks derived from real operational workloads, combining structured and unstructured data. It's a strong test of analytical reasoning (even the best agents score only 16%). But it targets general-purpose ambiguity across multi-modal data, not the kind of lineage-aware debugging we needed to evaluate.

Spider 2.0 (ICLR 2025 Oral) set an important precedent for environmental complexity. With 632 tasks across enterprise databases, some exceeding 3,000 columns, it pushed well beyond toy text-to-SQL. Its dbt variant (Spider2-dbt, 68 tasks) was a step toward the kind of repository-level reasoning we cared about, and its emphasis on real database systems and multi-dialect SQL established the pattern that later benchmarks followed.

DAComp (ByteDance, Dec 2025) and ADE-bench (dbt Labs) both follow the Spider 2.0 pattern, measuring agent performance in multi-layered data ecosystems. They're the closest to what we needed, testing real analytics engineering workflows with actual dbt projects and databases.

For our purposes, the environments weren't complex enough to stress-test lineage reasoning. ADE-bench's most complex project is around 60 models, small enough that a coding agent can read every file and brute-force its way to understanding. DAComp's data engineering tasks have an average of 32 tables with environments spanning tens of dbt files across staging, core, and mart layers. These are meaningful pipelines, but an agent with a large context window can still hold the entire project in memory and pattern-match its way through.

'We're going to need a bigger domain'

We needed environments where brute force doesn’t work, where the DAG is deep enough that tracing a bug from symptom to root cause requires genuine investigation, not just reading every file. That meant hundreds of models across multiple business domains, with enough cross-cutting dependencies that understanding one domain requires understanding its connections to three others.

No existing benchmark gave us either the environmental complexity or the eval-driven iteration loop we needed. So we built our own.

Defining a Benchmark

The domain: a production-scale warehouse

When designing our data model, we used the medallion architecture with staging, intermediate/transform, and marts layers feeding into reports and semantic views. We put a lot of effort into making the schema's scale and complexity rival that of a SaaS company's warehouse, representative of what real data teams navigate. We ended up with more than 260 dbt models, 120 macros, 90 config files, and thousands of files across our dbt project and BI layers.

Once we had our schema, we had to populate our tables with realistic data. We built state machines and seed generators to synthesize years of production-realistic data: user signups and upgrades, daily active usage patterns, license purchases and renewals, support tickets, sales opportunities, and more. This was an iterative process. The data's statistical properties had to emulate realistic events and honor the contracts of our schema as the data progressed through pipeline stages and was transformed into different time windows and aggregations, filling our data warehouse and BI reports.

Ironically, our data agent would have made all of this a simpler task! Alas, we hadn't built our agent yet, so we iterated by hand: we made assumptions about how the data was used, synthesized data to populate the staging tables, and tweaked our data-synthesis code after each new layer of dbt build failures.
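The state-machine approach to seed generation can be sketched roughly as follows. The states, transition probabilities, and event names are hypothetical placeholders chosen for illustration. Our actual generators cover many more entities and constraints.

```python
import random

# Hypothetical lifecycle states and daily transition probabilities.
TRANSITIONS = {
    "trial": [("trial", 0.80), ("paid", 0.12), ("churned", 0.08)],
    "paid": [("paid", 0.97), ("churned", 0.03)],
    "churned": [("churned", 1.0)],
}


def simulate_user(user_id: int, days: int, rng: random.Random) -> list[dict]:
    """Emit a signup row on day 0, then one row for each later state change."""
    state = "trial"
    events = [{"user_id": user_id, "day": 0, "event": "signup", "state": state}]
    for day in range(1, days + 1):
        next_states, weights = zip(*TRANSITIONS[state])
        new_state = rng.choices(next_states, weights=weights, k=1)[0]
        if new_state != state:
            events.append({
                "user_id": user_id,
                "day": day,
                "event": f"{state}_to_{new_state}",
                "state": new_state,
            })
            state = new_state
    return events


rng = random.Random(42)  # fixed seed so the generated rows are reproducible
rows = [row for uid in range(1, 4) for row in simulate_user(uid, days=90, rng=rng)]
print(len(rows), "event rows generated")
```

Because only legal transitions exist in the table, every generated history respects the lifecycle contract by construction (for example, no activity after churn), which is the property downstream dbt tests would check.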

When we were finished, we had an enterprise-scale warehouse. We could inject bugs in a staging model and watch downstream effects ripple through real cross-domain dependencies. Tweaks to a CRM table in the intermediate layer could cause revenue reports to misalign with license data, changing server telemetry aggregations could break dashboards, and so on.

The measurement: 200 ways to break production

For each benchmark task, we introduce a specific bug (it could be a code patch, a data injection, or both) that violates a data contract encoded in the project's dbt tests. The tests we developed alongside the project provided a rich source of realistic breaks in our system, and a deterministic evaluation signal: if the agent's fix makes dbt test pass, it solved the problem. If not, it didn't.
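As a rough sketch of that pass/fail signal, the snippet below scores a batch of attempts by the exit code of `dbt test`. The `BenchmarkTask` fields, the `score` helper, and the per-task workspace path are hypothetical. The `--select` and `--project-dir` flags are standard dbt CLI options, but the real harness involves more than this (isolation, data injection, restoring removed tests).

```python
import subprocess
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class BenchmarkTask:
    task_id: str
    injected_model: str  # hypothetical field: model where the bug was introduced
    test_selector: str   # hypothetical field: dbt selector for the violated contract


def run_dbt_test(selector: str, project_dir: str) -> int:
    """Run `dbt test` for one selector; dbt exits with 0 when every test passes."""
    result = subprocess.run(
        ["dbt", "test", "--select", selector, "--project-dir", project_dir],
        capture_output=True,
        text=True,
    )
    return result.returncode


def score(
    attempts: Iterable[tuple[BenchmarkTask, str]],
    runner: Callable[[str, str], int] = run_dbt_test,
) -> float:
    """Fraction of (task, workspace) attempts whose contract tests pass again."""
    results = [runner(task.test_selector, workspace) == 0 for task, workspace in attempts]
    return sum(results) / len(results) if results else 0.0
```

Passing the runner in as a parameter keeps the scoring logic testable without a warehouse connection: a stub that returns exit codes can stand in for dbt.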

The agent’s task is to fix the bug. We calibrate the task difficulty across four levels:

- Easy: the agent gets a prompt pointing at the failing test and the model where the bug lives.

- Medium: the injection moves upstream in the DAG, a hop or two away from the test that catches it.

- Hard: the injection is several layers upstream, and the prompt describes a downstream symptom rather than naming a model.

- Blind: the same prompt as Hard, with the test itself removed from the agent's workspace. There's no failing test to grep for. The agent gets only a business-user description: "The revenue dashboard looks wrong this quarter."

The larger the span of models between the failure injection and the point of discovery, the harder the task. This lets us measure not just whether an agent can fix a bug, but how well it navigates lineage under increasing ambiguity.

Writing challenging bugs in production environments: choose a model with a dbt test, inject buggy code upstream, and pick a downstream view where the bug manifests to use as the agent's prompt.

The 30-Point Gap

We developed our data agent against this benchmark until it could reliably score 80%. Vanilla Claude Code (same underlying model, no lineage tools) manages around 50%*.

That gap isn't about the model being smarter. It's about what the agent knows. With lineage context, the agent can ask "what's upstream of this model?" and get an answer in milliseconds, instead of reading dozens of SQL files trying to reconstruct the DAG in its context window. It can check downstream impact before making a change. It can trace a column-level dependency from a revenue report back to the staging model where the join went wrong.
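To illustrate what a column-level trace looks like, here is a minimal sketch that walks a lineage map from a report column back to the staging columns it depends on. The lineage dictionary, model names, and helper functions are hypothetical. This is not our agent's lineage tooling, only the shape of the question it answers.

```python
# Hypothetical column-level lineage: each (model, column) maps to the
# (model, column) pairs it is derived from. Names are illustrative only.
COLUMN_LINEAGE = {
    ("rpt_revenue", "net_revenue"): [("fct_revenue", "net_amount")],
    ("fct_revenue", "net_amount"): [
        ("int_orders_joined", "gross_amount"),
        ("int_orders_joined", "discount_amount"),
    ],
    ("int_orders_joined", "gross_amount"): [("stg_orders", "amount")],
    ("int_orders_joined", "discount_amount"): [("stg_discounts", "amount")],
}

Column = tuple[str, str]


def trace_upstream(column: Column, lineage: dict[Column, list[Column]]) -> list[Column]:
    """Return every upstream column reachable from `column`, each listed once."""
    visited: list[Column] = []
    seen = {column}
    stack = [column]
    while stack:
        current = stack.pop()
        for parent in lineage.get(current, []):
            if parent not in seen:
                seen.add(parent)
                visited.append(parent)
                stack.append(parent)
    return visited


def source_columns(column: Column, lineage: dict[Column, list[Column]]) -> list[Column]:
    """Upstream columns with no recorded parents, i.e. where the trace bottoms out."""
    return [c for c in trace_upstream(column, lineage) if c not in lineage]


print(source_columns(("rpt_revenue", "net_revenue"), COLUMN_LINEAGE))
```

With a precomputed map like this, the lookup is a dictionary walk rather than a re-read of dozens of SQL files, which is the difference the paragraph above describes.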

These numbers are a snapshot, not a finish line. While we improve our agent, we continuously iterate on the evals themselves: adding new failure scenarios, refining difficulty calibration, and building richer scoring dimensions. Eventually, we plan to add LLM-as-judge scoring to get a broader signal on agent trajectories, along with new task types such as data analysis and feature planning, to measure different capabilities and get more insight into the agent's understanding of the environment.

* It's worth noting that vanilla Claude Code with better prompting and purpose-built tools does better than the 50% baseline; the gap narrows as you give a coding agent more domain-aware scaffolding. The benchmark's job is to measure exactly how much each improvement matters.

The Series Ahead

Building a benchmark at this scale taught us as much as building the agent itself. Reverse-engineering an unfamiliar warehouse to synthesize realistic data, designing failure scenarios that can't be gamed, running hundreds of isolated agent evaluations in parallel on Snowflake and Kubernetes, even using our own lineage agent to generate new tasks: each of these turned out to be its own engineering problem.

In the next few blog posts, we’ll share more of our learnings and dive into our process for developing tasks efficiently, how we built the benchmark harness to evaluate agents in isolated workspaces at scale, and how developing new evaluations like LLM-as-judge facilitated data analysis and feature tasks.

If you're building agents for data-heavy domains, or evaluating an agent beyond a toy benchmark, keep your eyes peeled! We think there's something useful here for you.